The Great Deceleration

A Requiem for the Singularity

Part 1: Have We Passed Peak Science?

There have recently been renewed winds in the sails of the fashionable but moronic set of elites who believe fervently in the god of progress. We’re told artificial superintelligence will recursively solve all our problems, that we’ll be uploading consciousness to the computer soon enough. All the nauseating and by now old-hat prophecies are returning. This is of course due to the proliferation of machine learning via publicly accessible LLMs. As a result, the Kurzweils and Hararis of the world are back “in the discourse”, and so are their darker cousins, who offer more disquieting eschatological excretions and rabidly grab their popcorn in anticipation. In a time of rapid digital communication, hyperscalers and so forth, people either lament the dizziness of the age or celebrate it and wish for us to rev up faster and see where it all takes us.

The increasing obsession with novelty and tech as an answer to everything is in fact a kind of cargo cult, a repetition and recycling of old futurologies, and in my opinion a form of escapist fantasy for an age in which, beneath the shiny sheen, we see everywhere aging and stagnation: in the arts, in the literal mean age of the population, in the economy, and even in the vaunted science and technology at which we are supposedly meant to marvel.

In the philosophical world there are three major critiques of progress. The first is the ethical critique, something I associate most strongly with the British philosopher John Gray. The argument is that there is no necessary connection between material and ethical improvement. One can think of the Houthis and their drones, of the “dual applications” of mass industrial production for both civilian and military purposes, of abundance existing alongside moral decay. The point is simple enough: modern technology and rising living standards do not somehow guarantee that a society will become better in any deeper or more profound sense.

The second critique is the consolidation problem. The vast metabolic scale of modern society produces immense waste streams while rapidly depleting underlying stocks. The thinker most readily associated with this view is of course Thomas Malthus, who argued that periods of peace and abundance themselves generate the conditions for future ecological constraint, misery, and death. In the modern era, environmental thinkers of various stripes have inherited and expanded this line of thought, asking not merely about the benefits of growth, but about its costs, limits, and ultimately its transience. It is this line of critique that I have been pursuing most deeply.

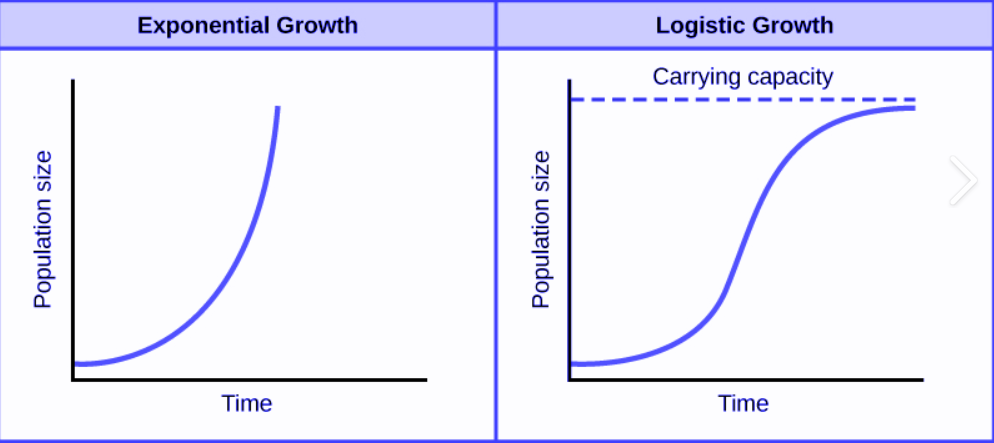

The final critique of progress is a direct attack on the assumptions of those who believe in unbounded and accelerating scientific and technical growth. It is a critique of the very notion of continual progress, of the assumption that the future returns of science and technology will necessarily resemble those of the past. One can picture this graphically. Most popular conceptions of progress in this domain implicitly assume either linear advance or perpetual exponential acceleration. These assumptions are embedded not only in mainstream economic forecasts of GDP growth, but also in the popular imagination of futurism itself, as though humanity will naturally and indefinitely progress from fire to the plough, from the steam engine to the aeroplane, from rockets to artificial superintelligence as an expected matter of course.

I think that once one applies Darwinian, naturalistic, and thermodynamic limits to science and technology, a very different picture emerges. The best model for understanding technological progression is not the straight line or the endlessly rising exponential curve, but the logistic curve.

The logistic curve was first identified in ecology through the study of populations in the wild, where one tends to observe rapid exponential growth followed by sharp deceleration as a population approaches the carrying capacity of its environment. It was also famously used by Hart (1945) to identify the pattern of rapid rise and then stagnation in the patents for a particular technology. One might object that there is no obvious K, no clear carrying capacity, to impose upon science or technology, and perhaps in some ultimate global sense that is true. I also want to make clear that I agree with John Gray that the ability to produce a titanium knee is indeed absolute progress, and that moral progress is total nonsense. The critique here isn’t that the accumulation of scientific and technical progress is an illusion; it’s about our expectations of the rate and scope of its improvements.

The two fundamental theoretical limits that do exist on science are probability and thermodynamics. In scientific inquiry one cannot surpass a probability of 1, and in technology one cannot exceed, or even truly reach, an efficiency of 100 percent. Beyond these lie the more practical impediments: funding constraints, political pressures, institutional sclerosis, and sociological dynamics that can retard scientific and technological development.

In other words, how you understand modernity comes down to which of these three equations you think best describes its growth:
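Taking the three options to be linear, exponential, and logistic growth, in that order (an assumption drawn from the discussion above; the symbols N, N_0, a, r, and K are illustrative labels), they can be written roughly as:

```latex
% Option one: linear growth at a constant absolute rate a
N(t) = N_0 + a t

% Option two: exponential growth at a constant relative rate r
\frac{dN}{dt} = r N
\quad\Rightarrow\quad
N(t) = N_0 e^{r t}

% Option three: logistic growth toward a ceiling K
\frac{dN}{dt} = r N \left(1 - \frac{N}{K}\right)
\quad\Rightarrow\quad
N(t) = \frac{K}{1 + \frac{K - N_0}{N_0} e^{-r t}}
```

While N is small relative to K, option three is almost indistinguishable from option two, which is why the two are so easily confused from inside the growth phase.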

Modernity can without question be distinguished from the past by its astonishing, breakneck growth. Thus we can immediately discard option one, leaving us caught between options two and three. Modernity witnessed hyperbolic population growth, not merely a constant doubling time, but a continual acceleration in which each doubling occurred faster than the last. The transition from 0 to 1 billion people took vastly longer than that from 1 to 2 billion, the jump from 2 to 3 was faster still, and so on. Extrapolated forward, the trend appeared absurd: by the 1950s and 1960s it notionally implied an infinite population sometime in the mid twenty-first century. Yet the pattern reversed in the late 1960s, when annual population growth peaked at roughly 2.1 percent before beginning its long decline.
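A minimal sketch of what hyperbolic growth means, assuming the simplest law N(t) = C / (T - t). The constants `C` and `T` below are illustrative round numbers chosen for clean arithmetic, not values fitted to census data:

```python
# Hyperbolic growth: N(t) = C / (T - t), which blows up as t approaches T.
# C and T are hypothetical round numbers, not a fit to real population data.
C = 2.0e11   # person-years (illustrative)
T = 2025.0   # year of the notional singularity (illustrative)


def year_reached(population: float) -> float:
    """Year at which the hyperbolic curve reaches the given population."""
    return T - C / population


def doubling_intervals(start: float, doublings: int) -> list[float]:
    """Years taken by each successive doubling, starting from `start`."""
    levels = [start * 2**i for i in range(doublings + 1)]
    years = [year_reached(n) for n in levels]
    return [later - earlier for earlier, later in zip(years, years[1:])]


if __name__ == "__main__":
    for gap in doubling_intervals(1e9, 3):
        print(f"{gap:.0f} years")
```

Under an exponential law each doubling would take the same number of years. Here each doubling takes half as long as the one before, which is why the extrapolation runs off to infinity at `T`.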

Economically, however, growth advanced even faster. Modernity therefore produced not only startling demographic expansion, but also rising material living standards for those populations. This transformation rested upon two foundations, one of which is widely acknowledged, the other far less so.

The first factor that enabled the explosion in both living standards and population from the early nineteenth century onward was the enormous expansion in the use of energy. Modern industrial, transport, electrical, and agricultural systems all depend upon the metabolism of energy, overwhelmingly derived from fossil carbon. The clearest way to understand this is ecologically. For a predator to survive, it must secure a positive energy return on investment from the animals it hunts, leaving enough surplus energy for reproduction.

Humanity became the Prometheus among the primates through successive revolutions in energy capture: first fire, then agriculture, then fossil fuels. Each marked a profound increase in our energy return on investment over the previous system, unlocking entirely new scales of population, production, and complexity.

The fact that energy is so often ignored is no coincidence. In agrarian societies, the greater part of the population was directly involved in the energy supply chain through food production. Discretionary activity, whether in art, science, culture, or recreation, was largely confined to a narrow elite while the peasant majority toiled to secure the energetic basis of society itself.

Modernity transformed this relationship through the collapse of energy’s visible share of labour and GDP, a collapse made possible by cheap energy and increasingly efficient machines. The energy sector remains inescapable and omnipresent, embedded within every industry and every corner of economic life, yet precisely because energy has become so abundant, efficient, and frictionless, it fades from conscious attention. Energy blindness is as characteristic of modernity as energy abundance (Tainter et al 2018).

The second great aspect of modernity is human ingenuity. It was human ingenuity, expressed through science and technology, that produced the marvels of modern chemistry and physics, but also advances in hygiene, medicine, and industry. Not only did population soar, but remarkably the number of scientists per capita increased alongside it. The scale of progress was genuinely earthshattering and often dizzying in its pace.

Physics before Isaac Newton appears almost worthless by comparison with what followed. Generation after generation produced larger scientific communities than the last, each convinced, rightly, that it stood on the front line of the greatest and most pressing questions yet confronted. Across virtually every field, human knowledge and technical capability advanced by something approaching orders of magnitude within the span of a single generation.

One question that has remained at the forefront of modern consciousness is the finitude of the earth: its finite carrying capacity, its limited ability to absorb waste, and its constrained capacity to regenerate underlying stocks. One of the genuinely astonishing achievements of modernity, and the fact which most famously cost Paul Ehrlich his wager with Julian Simon, is that commodity prices did not undergo the sustained explosion many expected. Instead, over the long run, commodity prices have remained remarkably stable.

This stability reflects the clash of two opposing forces. The first is what Cutler Cleveland (2008) termed the “best first principle.” When exploiting natural resources, we naturally extract the highest quality and most accessible deposits first, only later being forced toward poorer, more remote, and more difficult reserves. We move from vast gushing conventional oil fields to deposits buried deep beneath the ocean, trapped within shale rock, or locked in low-quality bituminous sands. We move from copper ores visible to the naked eye to concentrations measured in mere parts per million.

This, however, collides directly with human ingenuity. We become more efficient at extraction, deploy larger quantities of cheap energy, and develop new tools capable of exploiting entirely new classes of reserves, such that in the end the price remains broadly stable. The inflationary pressure exerted by finite nature clashes against the deflationary pressure of innovation, and thus far the two forces have remained locked in an uneasy stalemate (Jacks 2013).

I am deeply sceptical that this balance can persist indefinitely. I remain an unapologetic peak oiler, and countless other finite stocks are also being managed recklessly in service to the insatiable engine of economic growth. But I do not want to relitigate that debate here, because it belongs more properly to the second critique of progress.

The question I am turning to is not one of the stocks and flows of matter and energy, but of ideas themselves. What if we have already harvested the low-hanging fruit of science and technology? What if sustaining the rapid progress associated with modernity becomes harder and harder over time? I believe this is precisely what is happening. I believe we are living through the great deceleration, the exhaustion of modernity itself. It is for this reason that I do not believe in the kinds of futures currently being sold to us.

It is hardly an original insight that accelerationism is, at root, a desperate attempt to escape the physical world, something nowhere more obvious than in fantasies of uploading consciousness into a computer. I do not believe in the grand pretensions of humanity, whether they arrive clothed as democracy, communism, or Christianity. Techno-optimism is simply the latest incarnation of the same impulse, deriving its peculiar strength from the fact that it can be embraced equally by supposed cynics like Peter Thiel and by the fairy-tale utopianism of Fully Automated Luxury Communism.

The accelerationist imagines himself riding some immense historical wave, when in reality he is often doing little more than free advertising for dominant capital, however inadvertently, while searching for an external locus of identity in whatever happens to be the latest thing.

The insight that scientific progress is subject to diminishing returns was stated with remarkable precision by C.S. Peirce in a note written for the United States Coast Survey in 1876. Peirce’s starting point was simple: the progress of any research programme is marked by the diminution of the probable error of its results. Each successive reduction in error has a cost. As investigation proceeds, additions to knowledge cost more and more while simultaneously being of less and less worth (Peirce, 1879 [1967], p. 644).

The illustration Peirce chose was chemistry. When chemistry came into being, he observed, Dr Wollaston, with a few test tubes and phials on a tea-tray, was able to make discoveries of the greatest moment. By contrast, a thousand chemists with the most elaborate appliances cannot reach results comparable in interest with those early ones (Peirce, 1879 [1967], p. 644). All the sciences, Peirce noted, exhibit the same phenomenon.

Peirce derived a principle of increasing effort formally. He showed that the cost of increasing precision, when observations are multiplied, grows in proportion to the square of the precision achieved (Peirce, 1879 [1967], p. 644). The implication is that research faces diminishing returns and, more specifically, accelerating costs for equivalent increments of knowledge. The urgency fraction, the ratio of utility to cost, falls systematically as a field is worked out, and rational allocation of effort should shift towards fresher, less-depleted problems. But all problems eventually saturate, and the frontier itself narrows. The point Peirce was making was not that progress was doomed to end, but that the ratio of results to effort enjoyed by previous generations belonged to an adolescent phase and is completely irrecoverable.
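One way to see the square law, assuming precision is read as the inverse of the standard error of a mean of n independent observations. This is a textbook reading rather than a reproduction of Peirce's own derivation, and the function name and the value of `sigma` are illustrative:

```python
import math


def observations_needed(sigma: float, target_error: float) -> int:
    """Observations required so that sigma / sqrt(n) <= target_error.

    Assumes independent observations with a common standard deviation sigma,
    so the standard error of the mean is sigma / sqrt(n).
    """
    return math.ceil((sigma / target_error) ** 2)


if __name__ == "__main__":
    sigma = 1.0  # hypothetical spread of a single observation
    for target in (0.5, 0.25, 0.125):
        n = observations_needed(sigma, target)
        print(f"standard error {target}: {n} observations")
```

If cost is proportional to the number of observations, each halving of the error quadruples the bill: doubling the precision costs four times as much, tripling it nine times as much.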

Peirce also observed, with a wry precision that cuts to the heart of the modern research enterprise, that his theory rests on the supposition that the object of investigation is the ascertainment of truth. When investigation is made for the purpose of attaining personal distinction, the economics of the problem are entirely different, but that, he noted, seems to be well enough understood by those engaged in that sort of investigation (Peirce, 1879 [1967], p. 648).

The next great writer to recognise this problem was Derek de Solla Price. De Solla Price’s central empirical finding was that every measurable index of science (journals, papers, abstracts, manpower, important discoveries) had grown exponentially for two to three centuries, doubling in size every ten to fifteen years (de Solla Price, 1963, p. 5). The crude size of science in manpower or publications doubled within ten to fifteen years regardless of how it was measured; quality-filtered measures stretched the doubling time to around twenty years. The result was the peculiar immediacy of modern science: because science had been doubling every fifteen years and a scientist’s working life spans roughly forty-five years, at any moment roughly 80 to 90 percent of all scientists who had ever lived were still alive (de Solla Price, 1963, pp. 1–2). Every generation of scientists felt itself to be living through the decisive period of science, because for each of them it was true.
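The 80 to 90 percent figure follows from the doubling time alone. A rough sketch, assuming steady exponential growth in the cumulative number of scientists and an identical career length for everyone (both simplifications; the function name is illustrative):

```python
def fraction_still_working(doubling_years: float, career_years: float) -> float:
    """Share of all scientists ever trained who are still within their careers.

    Assumes cumulative entrants grow as 2 ** (t / doubling_years) and that
    every scientist works for exactly career_years.
    """
    return 1.0 - 2.0 ** (-career_years / doubling_years)


if __name__ == "__main__":
    for doubling in (10.0, 15.0, 20.0):
        share = fraction_still_working(doubling, 45.0)
        print(f"doubling every {doubling:.0f} years: {share:.1%} still working")
```

With a fifteen-year doubling time and a forty-five-year career, three doublings fit inside one working life, so only one eighth of all scientists ever trained belong to the past.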

Exponential growth in any finite system must eventually become logistic. It must trace an S-curve, passing through a point of inflection and approaching a ceiling (de Solla Price, 1963, p. 19). The arithmetic was brutal: scientists and engineers already constituted a couple of percent of the US labour force; research and development expenditure was a comparable fraction of GDP. Another two orders of magnitude of growth, he observed, would require two scientists for every man, woman, child and dog in the population, and twice the national income to pay for them. Scientific doomsday, he calculated, was less than a century away from the time of his writing (de Solla Price, 1963, p. 19).
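A rough check on the timescale, assuming the historical doubling times simply continued unchanged (the function name is illustrative, and this is back-of-envelope arithmetic rather than de Solla Price's own calculation):

```python
import math


def years_to_grow(factor: float, doubling_years: float) -> float:
    """Years needed to grow by `factor` at a fixed doubling time."""
    return math.log2(factor) * doubling_years


if __name__ == "__main__":
    # Two orders of magnitude is a factor of 100, about 6.6 doublings.
    for doubling in (10.0, 15.0):
        years = years_to_grow(100.0, doubling)
        print(f"doubling every {doubling:.0f} years: {years:.0f} years to grow 100-fold")
```

Both ends of the ten-to-fifteen-year range put the impossible hundredfold expansion less than a century out.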

More specifically, de Solla Price suggested that the midpoint of the logistic curve for science as a whole, the inflection at which exponential growth gives way to the decelerating upper half of the S, had probably been passed somewhere during the 1940s or 1950s (de Solla Price, 1963, p. 31). Big Science, in his account, was not evidence of continuing exponential vitality but the characteristic syndrome of a system approaching saturation: escalating costs, growing teams, institutional overhead, and the frantic competitive dynamics of a domain running out of easy problems. He wasn’t a Spenglerian mystic of any sort. In his account saturation seldom implies death, but rather the beginning of new tactics for science, operating with quite new ground rules (de Solla Price, 1963, p. 32).

We’ve seen the conceptual ground of scientific inquiry and the kind of logic it follows. In technology, by contrast, the limit is thermodynamics. LED lighting is the paradigmatic example. Luminous efficacy has improved dramatically over recent decades, but it is now approaching the theoretical maximum set by the physics of photon emission: the fraction of electrical energy that can be converted to visible light is bounded by quantum mechanics. Haitz’s law for LEDs, which has successfully forecast falls of orders of magnitude in cost per lumen, describes extraordinary gains, but the gains trace an S-curve with a thermodynamically fixed ceiling, not an open-ended exponential. Nordhaus-style calculations of the declining cost per lumen, often used to illustrate the inexorability of technological progress, are implicitly tracking the middle of this S-curve. The gains are real, but improvements in lighting efficiency have gone from massive leaps and bounds to the practically unnoticeable.

The same logic applies, with different parameters, to heat engines, electric motors, data transmission, photovoltaic conversion, and fertiliser use efficiency. In each case there is a theoretical limit defined by thermodynamics or quantum mechanics, and in each case the trajectory of improvement is a logistic curve approaching that limit rather than an open exponential.
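For heat engines the ceiling is the Carnot limit, which depends only on the temperatures of the hot and cold reservoirs. A small sketch, with hypothetical round-number temperatures:

```python
def carnot_efficiency(t_cold_k: float, t_hot_k: float) -> float:
    """Maximum efficiency of any heat engine between two reservoirs.

    Temperatures are absolute (kelvin). No real engine can exceed this bound.
    """
    if not 0 < t_cold_k < t_hot_k:
        raise ValueError("require 0 < t_cold_k < t_hot_k (kelvin)")
    return 1.0 - t_cold_k / t_hot_k


if __name__ == "__main__":
    # Hypothetical reservoirs: 300 K surroundings, hotter and hotter sources.
    for t_hot in (600.0, 1200.0, 1500.0):
        print(f"hot side {t_hot:.0f} K: at most {carnot_efficiency(300.0, t_hot):.0%}")
```

Raising the hot-side temperature buys less each time: the bound creeps toward 100 percent but never reaches it, which is the shape of the upper half of an S-curve.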

Nicholas Rescher formalised the reflections and insights of Max Planck in his principle of increasing effort. Planck had lived through the early wild years of quantum physics and relativity down to their maturation, and noticed year by year how problems became harder and harder to solve as the field progressed. The easier problems were tackled first, and subsequently it required more people and more sophisticated equipment to achieve the same level of results (Rescher, 1978, 1980, cited in Strumsky et al., 2010, pp. 499–500).

De Solla Price documented the financial expression of this principle directly. While the number of scientists doubled every ten to fifteen years, research expenditure in constant dollars was doubling every five and a half years, meaning the cost per scientist was itself doubling every decade (de Solla Price, 1963, p. 93). More strikingly still, he derived that research expenditure was increasing approximately as the square of the number of scientists, and therefore as the fourth power of the number of genuinely productive ones (de Solla Price, 1963, p. 93). Johnson and Milton, investigating research records across industry, universities and government institutions, found that over a decade in which total costs increased by a factor of four and a half, the output of research and development no more than doubled (de Solla Price, 1963, p. 94).
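The arithmetic behind these figures can be sketched from the doubling times alone, assuming both series grow as clean exponentials. The function names are illustrative, and the eleven-year headcount doubling is a hypothetical value inside the quoted ten-to-fifteen-year range:

```python
def ratio_doubling_years(numerator_doubling: float, denominator_doubling: float) -> float:
    """Doubling time of the ratio of two exponentially growing quantities."""
    # Growth rates in doublings per year simply subtract for a ratio.
    rate = 1.0 / numerator_doubling - 1.0 / denominator_doubling
    return 1.0 / rate


def scaling_exponent(spend_doubling: float, headcount_doubling: float) -> float:
    """Exponent k such that spend grows as headcount ** k."""
    return headcount_doubling / spend_doubling


if __name__ == "__main__":
    spend = 5.5  # years for research expenditure to double (from the text)
    for headcount in (10.0, 11.0, 15.0):
        per_head = ratio_doubling_years(spend, headcount)
        k = scaling_exponent(spend, headcount)
        print(f"headcount doubling {headcount:.0f} y: "
              f"cost per scientist doubles in {per_head:.1f} y, spend ~ headcount^{k:.1f}")
```

Across the range the cost per scientist doubles in roughly nine to twelve years, and the exponent sits close to two. If the productive core grows only as the square root of total headcount, which is the assumption that would yield the fourth-power figure, the exponent against productive scientists doubles again.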

Strumsky, Lobo and Tainter (2010) frame the same dynamic in terms of complexity. Complexity, defined in the anthropological sense as increasing differentiation and specialisation in structure combined with increasing integration of parts, generates what Kauffman called the complexity catastrophe: as the intensity of interconnectivity in a research problem grows, it becomes harder and harder to develop good, let alone optimal, solutions (Strumsky et al., 2010, p. 498). The transition from the age of the lone naturalist to the age of the team, from Darwin working with a notebook to the pharmaceutical trial requiring hundreds of researchers, clinical sites, regulatory submissions and statisticians, cannot simply be a sociological shift; it reflects the maturation of a field. It is the institutional expression of Peirce's accelerating cost of precision.

De Solla Price observed an additional and troubling corollary of this process. As science expanded and began drawing on a larger fraction of the potential scientific talent pool, it moved progressively further down the distribution of scientific ability. Early in a field's development, only those with the strongest intrinsic motivation and highest ability entered; the ripe apples fell of their own accord from the tree. As the enterprise grew, increasingly active inducements (salaries, status, institutional support) were required to recruit scientists who would not otherwise have been drawn to the profession. This meant, in his account, that increased expenditure on science produced returns that were increasing in absolute output but declining in output per unit of investment. The exponential growth in science was starting to compete with profitable professions because of the increased requirement for manpower, which made further gains in manpower disproportionately difficult (de Solla Price, 1963, pp. 102–103).

Part 2: Quantifying the Great Deceleration

A. Qualitative studies

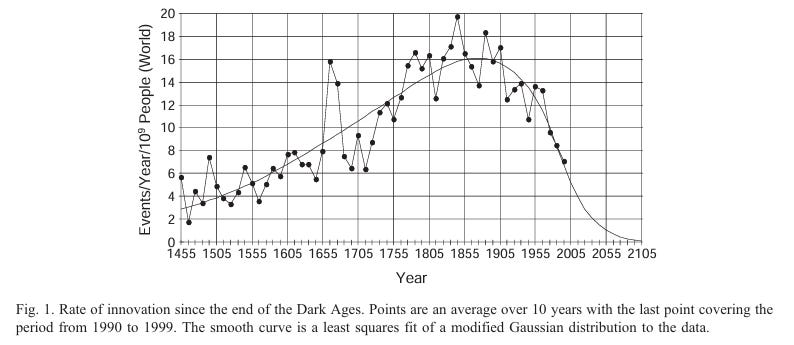

What I mean by qualitative studies here are studies which attempt to apply scientific rigour to the qualitative distinction between a “major” breakthrough and a minor one, and then track the historical progression of such breakthroughs. Huebner (2005), in his nice little paper, did so by taking the data on 8583 major scientific and technical events, examining the most recent 7198 for his timescale, from the landmark study on the history of science and technology by Bunch and Hellemans (2004). He produced a graph which showed a sharp per capita increase in innovation which peaked in the late nineteenth century and has declined continually since. I think the upslope here is very impressive, because a per capita increase in the face of sharp, sudden population growth captures scientific modernity, though there could possibly be confounding variables on the downslope.

(Figure 1 from Huebner 2005)

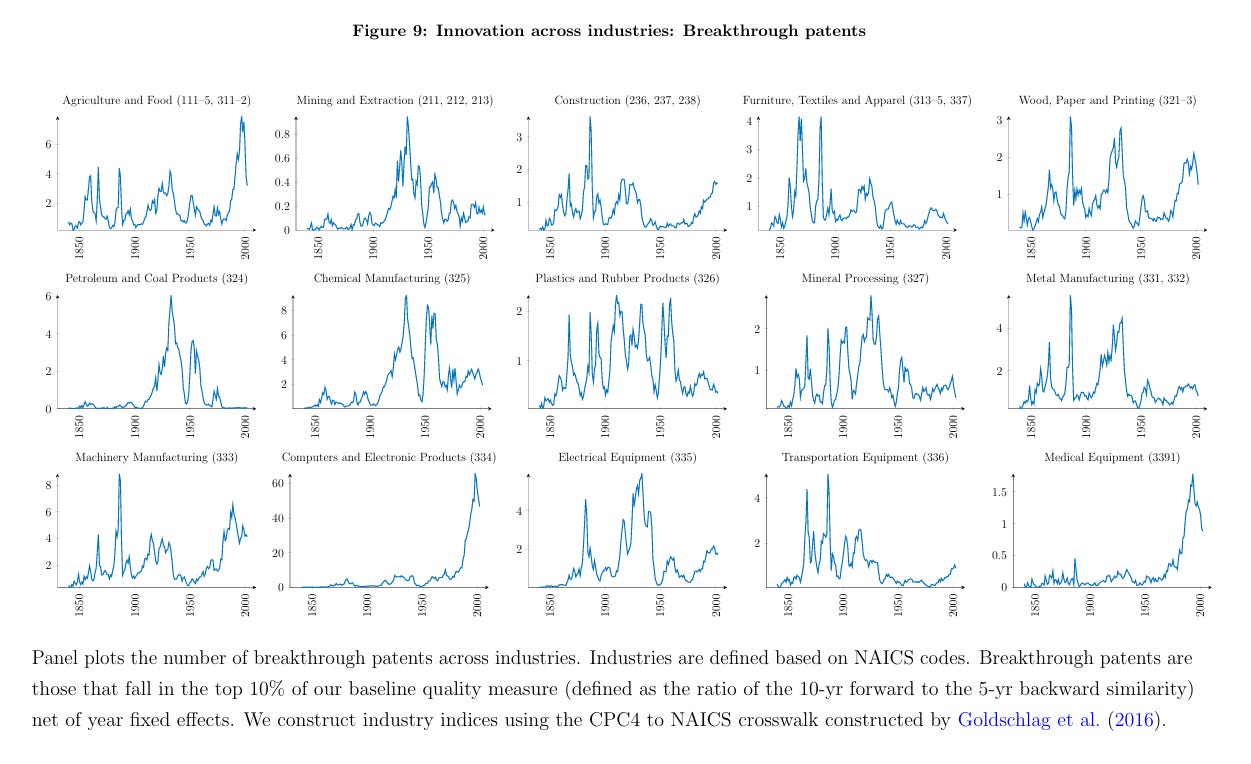

The major modern study which followed this approach was Kelly et al (2020). They analysed 9 million US patents from 1840 to 2010, systematically assessing through a full analysis of each document how novel a patent was and how much influence it had on subsequent patents. From this they developed a methodology for identifying a “breakthrough patent”, and found that more or less all technical fields had passed their peak in terms of major patents prior to 1950, with the exceptions of medical technology and computing.

(Figure 9 Kelly et al 2020)

B. Patents and Combinatorics

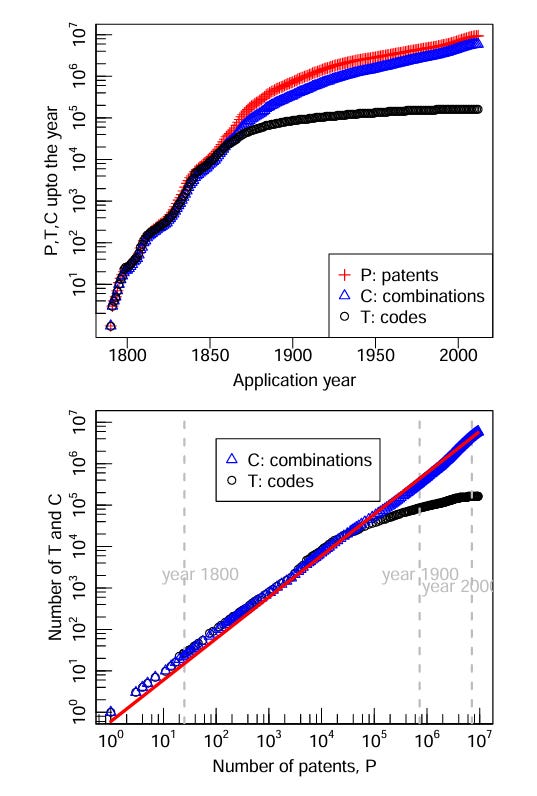

Academic literature on the stagnation of scientific progress has been around for some time now, and within that time an interesting trend has in fact reversed. Griliches (1994) and Kortum (1997) both noted that patents per inventor plummeted between the 1920s and 1990s. That decline has since reversed just as sharply. Does this mean that we’ve reversed the trend of decline? No, it doesn’t. What it signals is the rise of combinatorics relative to original technology codes. Youn et al. (2014) provide the most rigorous examination of this claim, drawing on US patent records from 1790 to 2010.

Their central finding is that 77 percent of all patents granted across this period are classified using combinations of at least two technology codes, and that this proportion has risen to around 88 percent in recent decades (Youn et al., 2014, p. 5). More strikingly, the introduction of new combinations proceeds at a remarkably stable rate: approximately 60 percent of new patents instantiate a combination of technological capabilities not previously seen in the patent record, while 40 percent reuse existing combinations (Youn et al., 2014, p. 7). This is genuinely impressive. The combinatorial inventive process has maintained a near-constant rate of novelty generation across two centuries.

The crucial qualification, however, is that after around 1870 the introduction of entirely new technological capabilities, new technology codes representing genuinely novel technological vocabularies, slowed dramatically, while invention continued almost entirely through recombination of the existing repertoire (Youn et al., 2014, pp. 6–7, Figure 1). After approximately 150,000 technological functionalities had accumulated by the late nineteenth century, the increase in inventions proceeded with little addition to the existing stock (Youn et al., 2014, p. 8). Since around 1970, moreover, the proportion of patents involving narrow combinations, technologies drawn from within a single class, representing incremental refinement rather than cross-domain breakthrough, has risen back to around 50 percent (Youn et al., 2014, p. 11, Figure 5).

(Youn et al 2014 Figure 1)

This is the combinatorial form of the logistic curve: a practically infinite space of possible new arrangements of existing elements, explored with undiminished energy, but built on a vocabulary of foundational capabilities that stopped expanding a century and a half ago. The impressive patent statistics reflect the geometric expansion of a recombinant space, not the continued discovery of new scientific foundations. The system is in the upper half of its S-curve, generating enormous numbers of new combinations of what it already knows.
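To give a sense of how large that recombinant space is, here is a quick count of the possible pairings of a fixed vocabulary. It takes the figure of roughly 150,000 codes quoted above at face value and treats every pairing as admissible, which is a simplification; the names are illustrative:

```python
import math

# Stock of technology codes accumulated by the late nineteenth century,
# as quoted in the text; treated here as a fixed vocabulary.
CODES = 150_000


def possible_combinations(codes: int, size: int) -> int:
    """Number of distinct unordered combinations of `size` codes."""
    return math.comb(codes, size)


if __name__ == "__main__":
    print(f"pairs:   {possible_combinations(CODES, 2):,}")
    print(f"triples: {possible_combinations(CODES, 3):,}")
```

Pairs alone run to more than eleven billion, so the supply of new combinations is in no danger of running out even if not a single new code is ever added.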

C. Research Productivity

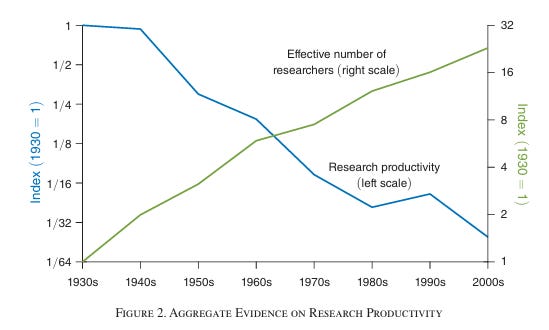

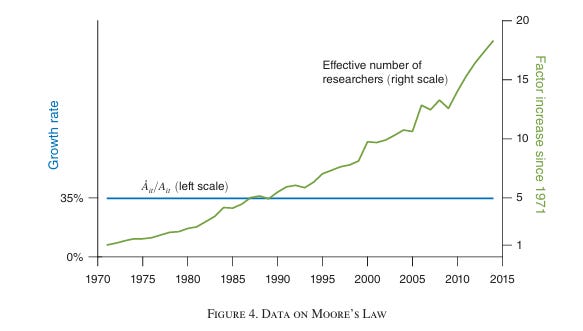

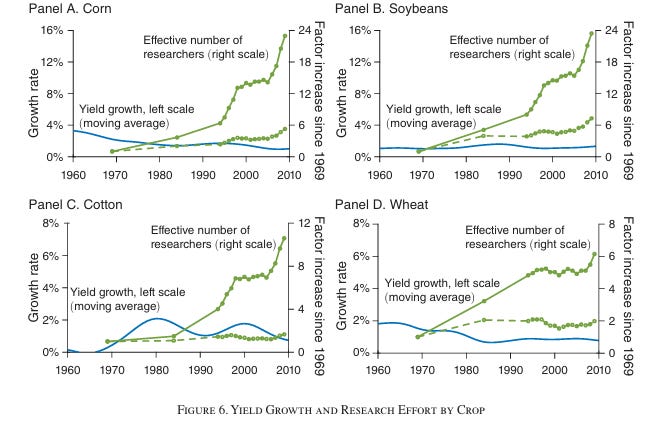

Bloom et al. (2020) provide the most methodologically rigorous account of falling research productivity across the entire economy. Their approach, comparing research inputs with measurable outputs in domains where the frontier is precisely definable, is designed precisely to avoid confounding the quantity of research activity with its productivity.

In semiconductors, the number of researchers required to sustain Moore’s Law has risen more than eighteen-fold since the early 1970s, implying a 7 percent annual decline in research productivity (Bloom et al., 2020, pp. 1105–1106). In agriculture, yield growth for major crops remains roughly 1–2 percent per year while the effective number of researchers has risen by factors ranging from 6 to over 25, producing 4–10 percent annual declines in research productivity (pp. 1119–1122). In medicine, approvals of new molecular entities remain flat while pharmaceutical R&D expenditure rises sharply, yielding a five- to eleven-fold fall in research productivity (p. 1123). At the firm level, Compustat and Census data reveal 8–10 percent annual declines (p. 1105). At the aggregate level, US research productivity has fallen forty-one-fold since the 1930s, an average decline of 5.1 percent per year, even as total factor productivity growth has remained broadly stable (pp. 1110–1111). The paper’s conclusion is stark: ideas are getting dramatically harder to find, and modern growth persists only because society continually expands research effort to offset this pervasive decline.

(Figures 2, 4 and 6 from Bloom et al 2020)

This constitutes perhaps the clearest confirmation of the lines of thought pursued by Charles Sanders Peirce and Derek J. de Solla Price. We have witnessed continued growth in measures such as Moore’s Law and total factor productivity, yet maintaining those same rates of advance now requires vastly greater expenditures of money, labour, and institutional effort. The data presented in Bloom et al. (2020) imply that the number of scientists must double roughly every thirteen years merely to sustain constant rates of growth. In a post-Keynesian economic framework, this aligns naturally with “rise and fall of growth” models such as those advanced by Robert J. Gordon (2016). If, however, one also takes depletion and ecology seriously, the implications appear considerably bleaker.
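The doubling figure can be checked against the decline rates quoted above. This is a sketch assuming continuous compounding and that research effort must grow exactly as fast as productivity falls to hold output growth constant; the function names are illustrative:

```python
import math


def doubling_years_to_offset(annual_decline: float) -> float:
    """Years in which research effort must double to cancel a steady
    proportional decline in research productivity."""
    return math.log(2) / annual_decline


def years_for_fold_decline(fold: float, annual_decline: float) -> float:
    """Years a steady decline needs to compound into a `fold`-fold fall."""
    return math.log(fold) / annual_decline


if __name__ == "__main__":
    # Aggregate US figures from the text: 5.1 percent per year, 41-fold overall.
    print(f"effort doubles every {doubling_years_to_offset(0.051):.1f} years")
    print(f"41-fold fall takes {years_for_fold_decline(41.0, 0.051):.0f} years")
    # Semiconductors: 7 percent per year, 18-fold overall.
    print(f"18-fold fall takes {years_for_fold_decline(18.0, 0.07):.0f} years")
```

A 5.1 percent annual decline implies a doubling of effort every thirteen to fourteen years, and compounds to a forty-one-fold fall over a little more than seven decades, which is consistent with a series running from the 1930s.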

The evidence is now broad, cumulative, and remarkably consistent. Research productivity appears to be declining across domains and by virtually every metric available. The vocabulary of genuinely new technological capabilities has expanded only marginally since the late nineteenth century. Breakthroughs are increasingly concentrated in narrow refinements within already established paradigms. Research teams grow ever larger and more expensive while producing less on a per-researcher basis. Bloom et al. estimate an aggregate forty-one-fold decline in U.S. research productivity since the 1930s, even while total factor productivity itself remains broadly stable. We are running faster to remain in the same place.

None of this amounts to a declaration of the death of science. I am very glad to live in the age of the Large Hadron Collider rather than the age of Isaac Newton. But does not that immense and staggeringly expensive machine, together with the countless scientists required to operate it, stand in rather stark contrast to the comparatively limited theoretical progress achieved in fundamental physics since the 1970s? Is it not, in its own way, an obvious image of the great deceleration?

Conclusion

The problem is not science or technology, but man himself. Humanity constructed the extraordinary edifice of modernity. The difficulty is that its continued prosperity depends upon the assumption that the historically exceptional conditions which created it, abundant fossil fuels and rapid techno-industrial expansion, can continue indefinitely at roughly the same pace. I believe humanity faces a very different trajectory: one in which we are compelled to confront our limits and learn to live within our means, as stewards and guardians.

Yet such a vision is difficult even to imagine being implemented, because it requires genuine introspection about the mistakes we have made and recognition that we have constructed a system fundamentally not built to endure. More precisely, it is a system premised upon the continuation of infinite growth on a finite planet, coupled with the assumption that science and technology will indefinitely rescue us from the consequences of our own excesses.

We are told that we must always become more efficient, always discover substitutions, always accelerate onward, never pausing long enough to ask why we are doing any of this in the first place. Global warming commands such immense attention partly because it flatters our hubris. We imagine ourselves creating planetary problems through our excess and then solving them through still greater excess and still more technology. We behave as though we were not animals embedded in the soil and dependent upon water, energy, and ecology, but detached selectors of computer avatars, choosing programmes to run upon an infinitely malleable world to be shaped entirely according to our will.

Problems of depletion are frightening precisely because they are so banal and so avoidable. They arise simply from an almost total unwillingness to relinquish present comfort and consumption in favour of preserving resources, both for the natural world and for those who will come after us.

The idea of infinite, unbounded, and perpetually accelerating scientific progress rests upon a carefully constructed fantasy born out of something historically real and extraordinary: for a significant period, practically every major technical and scientific field genuinely was exploding with rapid advances. But we have passed that point. What we see now are rarer and more sporadic sparks concentrated in a handful of sectors, while much of the world’s remaining growth derives from the diffusion and adoption of technologies that already exist. We are living through a period of deceleration.

Science itself is something I hold deeply dear, and its achievements remain astonishingly real. I have not given up on the future. I simply do not believe it will be a future in which humanity indefinitely evades fate knocking at the door. Techno-optimism is a form of magical thinking. It fails to take real science and real limits seriously, and it escapes the scrutiny directed towards traditional religious eschatologies only because it clothes itself in the language of technology rather than theology.

Reference List

Bloom, N., Jones, C.I., Van Reenen, J. and Webb, M. (2020) ‘Are ideas getting harder to find?’, American Economic Review, 110(4), pp. 1104–1144.

Cleveland, C.J. (2008) ‘Natural resource quality’, in Cleveland, C.J. (ed.) The Encyclopedia of Earth.

De Solla Price, D. (1963) Little Science, Big Science. New York: Columbia University Press.

Gordon, R.J. (2016) The rise and fall of American growth: The U.S. standard of living since the Civil War. Princeton, NJ: Princeton University Press.

Griliches, Z. (1994) ‘Productivity, R&D and the data constraint’, American Economic Review, 84(1), pp. 1–23.

Hart, H. (1945) ‘Logistic social trends’, American Journal of Sociology, 50(4), pp. 337–352.

Huebner, J. (2005) ‘A possible declining trend for worldwide innovation’, Technological Forecasting and Social Change, 72(8), pp. 980–986.

Jacks, D.S. (2013) From Boom to Bust: A Typology of Real Commodity Prices in the Long Run. National Bureau of Economic Research Working Paper Series 18874. doi:10.3386/w18874.

Kortum, S. (1997) ‘Research, patenting, and technological change’, Econometrica, 65(6), pp. 1389–1419.

Peirce, C.S. (1879 [1967]) ‘Note on the theory of the economy of research’, Operations Research, 15(4), pp. 643–648.

Rescher, N. (1978) Scientific Progress: A Philosophical Essay on the Economics of Research in Natural Science. Pittsburgh: University of Pittsburgh Press.

Rescher, N. (1980) Unpopular Essays on Technological Progress. Pittsburgh: University of Pittsburgh Press.

Strumsky, D., Lobo, J. and Tainter, J.A. (2010) ‘Complexity and the productivity of innovation’, Systems Research and Behavioral Science, 27(5), pp. 496–509.

Tainter, J.A. (1988) The Collapse of Complex Societies. Cambridge: Cambridge University Press.

Tainter, J.A., Strumsky, D., Taylor, T.G., Arnold, M. and Lobo, J. (2018) ‘Depletion vs innovation: the fundamental question of sustainability’, in Burlando, R. and Tartaglia, A. (eds.) Physical Limits to Economic Growth. Abingdon: Routledge, pp. 65–94.

Youn, H., Bettencourt, L.M.A., Strumsky, D. and Lobo, J. (2014) ‘Invention as a combinatorial process: evidence from U.S. patents’, arXiv. Available at: arXiv:1406.2938.

“You don't get it bro. Embryo selection, gene editing and pronatalism will raise the mean IQ of the population to 140 and we will be able to double or even triple the number of productive researchers every decade. Trust the tech oligarchs.”

This is generally agreeable with what work I've done and the education I've received in material sciences.

I look at people who complain that useful papers have been declining for a long time in proportion, and perhaps it may be that useful papers are just too difficult to produce. Every scientist could be acting in good faith and still coming up short due to hard physical limits. And the only issue with the scientific system is that perhaps it isn't built with this fact in mind.